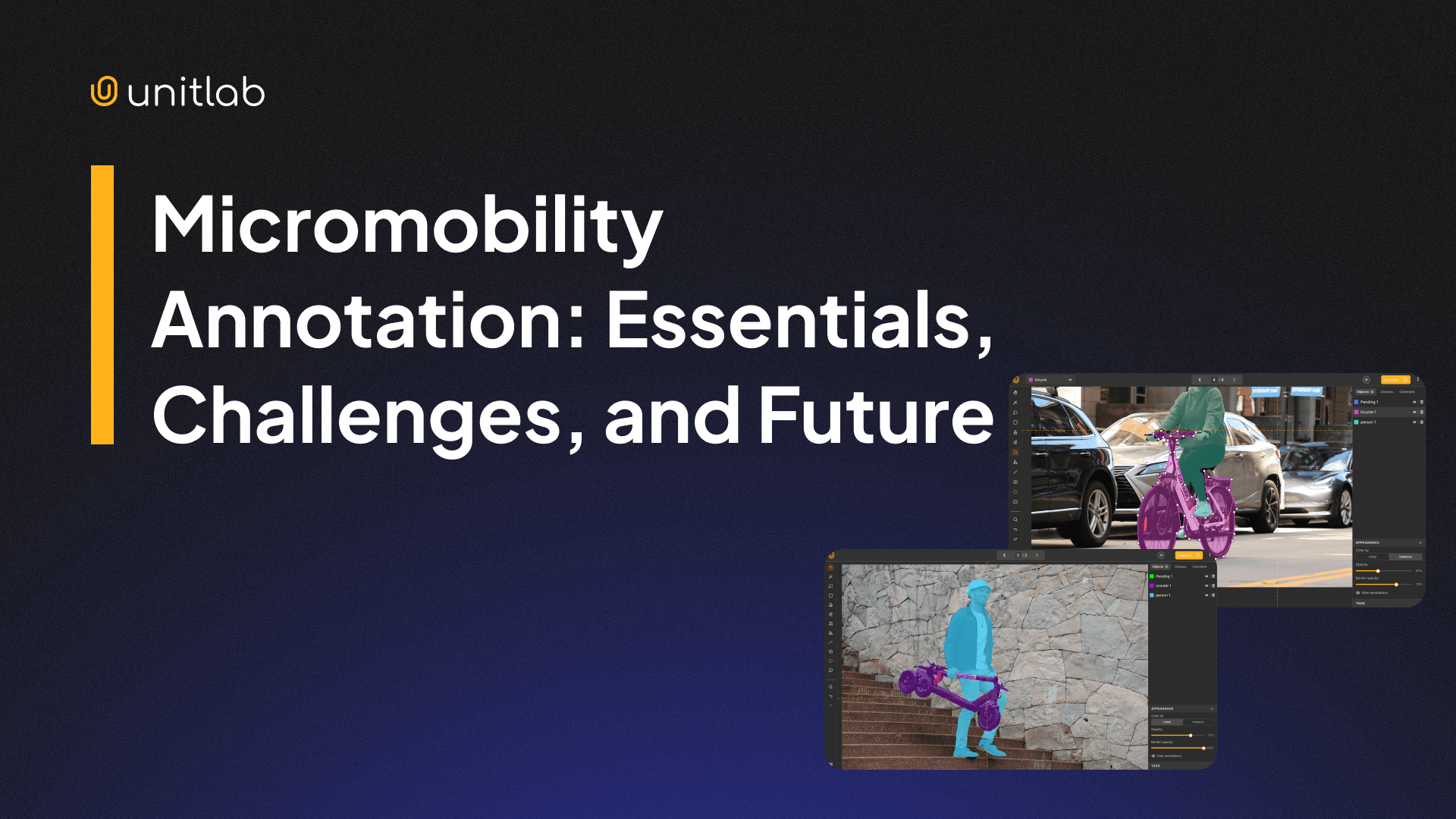

If you live in a city, you have already seen the shift: e-scooters weave between cars at intersections, e-bikes occupy lanes that used to belong exclusively to cyclists, and shared bicycles clutter sidewalks and parking zones. Micromobility has moved from novelty to infrastructure, and it is moving fast.

For AI and computer vision engineers, that shift creates a concrete problem. Perception systems trained on cars, trucks, buses, and pedestrians were not designed for small, fast, geometrically irregular vehicles operating in unpredictable mixed-traffic environments. Existing datasets fall short because their annotation strategies were never built for this vehicle class, and as a result most models underperform on it.

This post covers what micromobility annotation actually involves, where the technical difficulty lies, and how to build annotation pipelines that produce reliable perception models for this emerging vehicle class.

What Is Micromobility?

Micromobility refers to lightweight, low-speed personal vehicles used for short urban trips, such as electric scooters, e-bikes, shared bicycles, electric skateboards, and similar compact modes. In dense cities, they routinely substitute for car trips under five kilometers, filling the gap between walking and public transit.

The scale of adoption makes this commercially and technically significant. According to McKinsey, the global micromobility market is projected to exceed $340 billion by 2030. Modern cities are redesigning infrastructure around these vehicles: dedicated bike lanes, scooter-sharing systems, and mixed-traffic corridors are becoming standard features of urban planning.

That infrastructure shift changes the visual environment that computer perception systems must interpret. A traffic camera or autonomous vehicle sensor that handles cars and pedestrians confidently will encounter e-scooters, cargo bikes, and riders in configurations it has never been trained on. Closing that gap starts with annotation.

What Is Micromobility Annotation?

Micromobility annotation is the structured labeling of images, video frames, or multimodal sensor data to represent micromobility vehicles and their behaviors in urban environments. Drawing a bounding box around a scooter is the floor, not the ceiling.

Effective micromobility annotation captures a richer set of attributes:

- Vehicle class: e-scooter, e-bike, cargo bike, skateboard, and so on

- Rider presence and pose

- Motion state: moving, parked, or fallen

- Lane or path context

- Interactions with other traffic participants

These labels train object detection, tracking, segmentation, and behavior prediction models that are specifically calibrated for micromobility-heavy scenes.
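The attribute list above can be sketched as a per-object record. This is a minimal illustration, not a standard format: the class name `MicromobilityLabel`, its field names, and the allowed string values are all assumptions made for this example.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional, Tuple


class VehicleClass(Enum):
    E_SCOOTER = "e-scooter"
    E_BIKE = "e-bike"
    CARGO_BIKE = "cargo bike"
    SKATEBOARD = "skateboard"


class MotionState(Enum):
    MOVING = "moving"
    PARKED = "parked"
    FALLEN = "fallen"


@dataclass
class MicromobilityLabel:
    """One labeled object in one frame (hypothetical schema)."""
    track_id: int                                  # stable identity across frames
    vehicle_class: VehicleClass
    bbox_xyxy: Tuple[float, float, float, float]   # pixels: x1, y1, x2, y2
    rider_present: bool
    motion_state: MotionState
    rider_pose: Optional[str] = None               # e.g. "standing"; None if no rider
    lane_context: Optional[str] = None             # e.g. "bike_lane", "sidewalk"
    interacts_with: List[int] = field(default_factory=list)  # other track IDs

    def validate(self) -> List[str]:
        """Return a list of human-readable problems; empty means consistent."""
        problems = []
        x1, y1, x2, y2 = self.bbox_xyxy
        if x2 <= x1 or y2 <= y1:
            problems.append("bbox has non-positive width or height")
        if not self.rider_present and self.rider_pose is not None:
            problems.append("rider_pose set but rider_present is False")
        if self.track_id in self.interacts_with:
            problems.append("object listed as interacting with itself")
        return problems


label = MicromobilityLabel(
    track_id=1,
    vehicle_class=VehicleClass.E_SCOOTER,
    bbox_xyxy=(10, 20, 40, 100),
    rider_present=True,
    motion_state=MotionState.MOVING,
    rider_pose="standing",
    lane_context="bike_lane",
    interacts_with=[2],
)
print(label.validate())
```

Keeping the consistency checks next to the schema means contradictory attribute combinations can be rejected at labeling time rather than discovered during training.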

The granularity matters because:

- Separating the rider from the vehicle improves behavior modeling.

- Segmenting structural components like wheels or handlebars supports pose estimation and fine-grained geometry understanding.

- Tracking identity across video frames is essential because these vehicles accelerate and change direction quickly.

High-quality annotation is what makes any of that possible.

Annotation Types for Micromobility Vision

Different modeling objectives call for different annotation formats. Mature micromobility datasets and models typically combine several.

Bounding boxes are the starting point for object detection, providing coarse localization and class labels at scale. The limitation is geometric: thin or irregular objects like scooters produce boxes with significant background area, which reduces spatial precision and intersection-over-union (IoU) scores during model evaluation.
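One way to see why IoU is unforgiving for thin objects is to apply the same small localization error to a narrow box and a wide box. The sketch below uses made-up pixel dimensions for a scooter-sized and a car-sized box; the numbers are illustrative assumptions, not measurements.

```python
def iou(a, b):
    """IoU of two axis-aligned boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def shift(box, dx):
    """Move a box horizontally by dx pixels."""
    x1, y1, x2, y2 = box
    return (x1 + dx, y1, x2 + dx, y2)


scooter = (100, 100, 120, 200)  # 20 px wide, 100 px tall (illustrative)
car = (100, 100, 300, 200)      # 200 px wide, 100 px tall (illustrative)

# The same 5 px horizontal error applied to both boxes.
scooter_iou = iou(scooter, shift(scooter, 5))
car_iou = iou(car, shift(car, 5))
print(f"scooter IoU: {scooter_iou:.2f}, car IoU: {car_iou:.2f}")
```

With these numbers the scooter box drops to an IoU of 0.60 while the car box stays above 0.95, so an identical labeling or prediction error sits much closer to a typical 0.5 matching threshold for the thin object.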

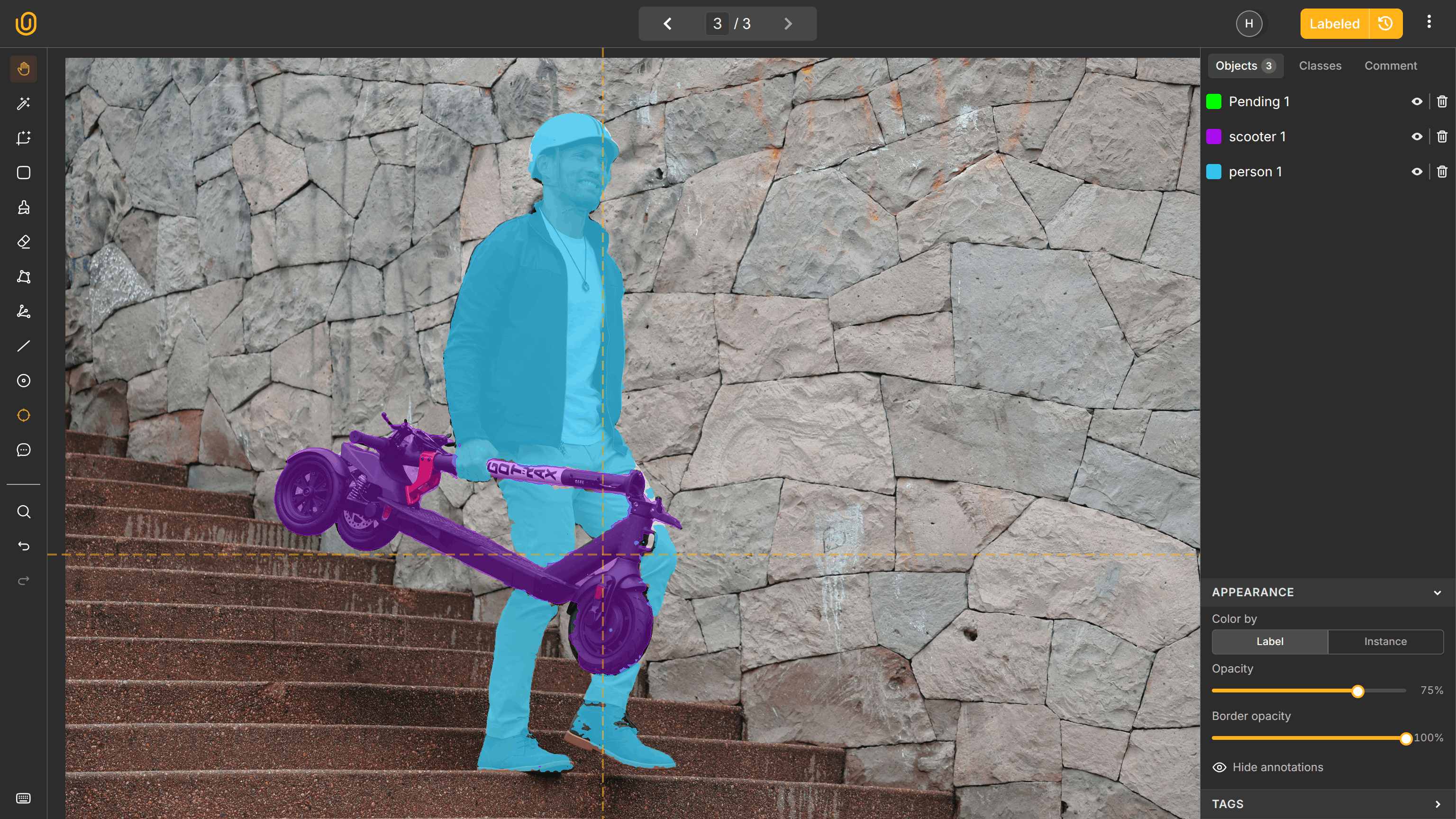

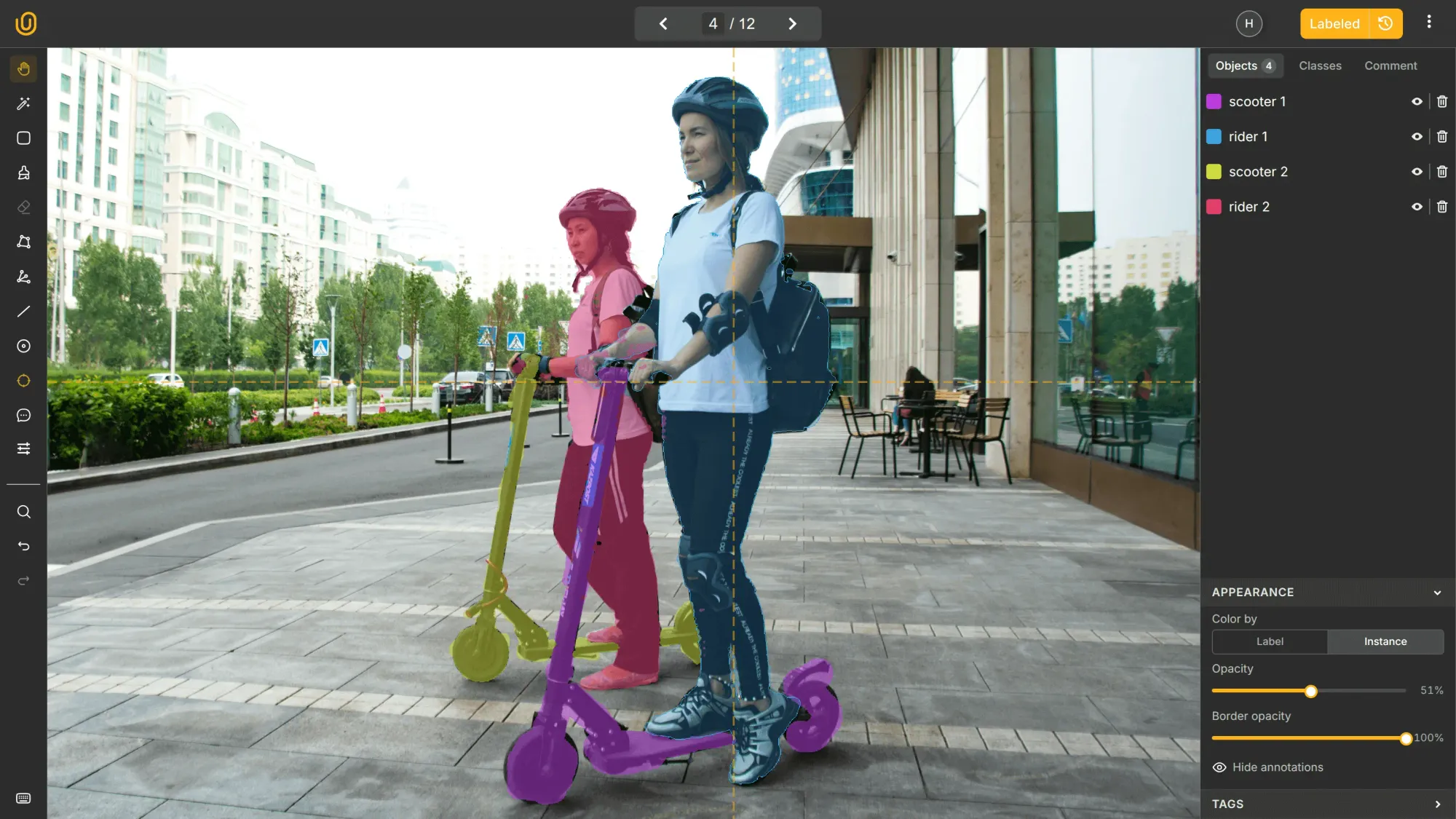

Instance segmentation addresses this issue by outlining exact object contours. It improves performance in crowded scenes with heavy overlap and occlusion, where bounding boxes alone cause models to conflate adjacent objects.
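The gap between a contour and its enclosing box can be quantified as a fill ratio: polygon area divided by bounding box area. The sketch below uses the shoelace formula on a crude L-shaped outline standing in for a scooter (stem plus deck); the coordinates are invented for illustration.

```python
def polygon_area(points):
    """Area of a simple polygon via the shoelace formula."""
    n = len(points)
    twice_area = 0.0
    for i in range(n):
        x1, y1 = points[i]
        x2, y2 = points[(i + 1) % n]
        twice_area += x1 * y2 - x2 * y1
    return abs(twice_area) / 2.0


def enclosing_bbox(points):
    """Tightest axis-aligned box around the polygon, as (x1, y1, x2, y2)."""
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    return (min(xs), min(ys), max(xs), max(ys))


def fill_ratio(points):
    """Fraction of the enclosing box that the polygon actually covers."""
    x1, y1, x2, y2 = enclosing_bbox(points)
    box_area = (x2 - x1) * (y2 - y1)
    return polygon_area(points) / box_area if box_area > 0 else 0.0


# Crude L-shaped silhouette: a 4 px wide stem and a 4 px thick deck.
scooter_outline = [(0, 0), (4, 0), (4, 40), (30, 40), (30, 44), (0, 44)]

print(polygon_area(scooter_outline))          # 280.0
print(round(fill_ratio(scooter_outline), 3))  # 0.212
```

In this toy case roughly four fifths of the bounding box is background, which is the portion a segmentation mask removes from the training signal.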

Semantic segmentation operates at the infrastructure level rather than the object level. Labeling bike lanes, sidewalks, curbs, and road surfaces allows models to reason about spatial constraints and safe paths, not just the vehicles moving through them.

Keypoint annotation enables rider pose estimation. Head orientation, hand placement, and body posture carry predictive signal for risk assessment and intention modeling, particularly relevant for ADAS and safety systems.
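As a small illustration of how keypoints turn into a posture signal, the sketch below derives a torso lean angle from shoulder and hip keypoints. It assumes COCO-style `(x, y, v)` triples, where `v` is 0 for unlabeled points; the keypoint names and the function `torso_lean_degrees` are hypothetical choices for this example.

```python
import math


def midpoint(a, b):
    """Midpoint of two keypoints, ignoring the visibility flag."""
    return ((a[0] + b[0]) / 2.0, (a[1] + b[1]) / 2.0)


def torso_lean_degrees(keypoints):
    """Angle between the hip-to-shoulder line and image vertical.

    0 means upright; positive means the shoulders are to the right of
    the hips in the image. Returns None if a needed keypoint is unlabeled.
    """
    needed = ("left_shoulder", "right_shoulder", "left_hip", "right_hip")
    if any(name not in keypoints or keypoints[name][2] == 0 for name in needed):
        return None
    shoulder = midpoint(keypoints["left_shoulder"], keypoints["right_shoulder"])
    hip = midpoint(keypoints["left_hip"], keypoints["right_hip"])
    dx = shoulder[0] - hip[0]
    dy = hip[1] - shoulder[1]  # image y grows downward, so flip the sign
    return math.degrees(math.atan2(dx, dy))


upright = {
    "left_shoulder": (100, 50, 2), "right_shoulder": (120, 50, 2),
    "left_hip": (100, 110, 2), "right_hip": (120, 110, 2),
}
leaning = {
    "left_shoulder": (160, 50, 2), "right_shoulder": (180, 50, 2),
    "left_hip": (100, 110, 2), "right_hip": (120, 110, 2),
}
print(torso_lean_degrees(upright), torso_lean_degrees(leaning))
```

The angle is measured in the image plane only, so it depends on camera viewpoint; a production system would likely combine it with other cues.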

3D bounding boxes and LiDAR annotation are increasingly standard in autonomous driving pipelines. Reliable three-dimensional localization is critical for collision avoidance in scenarios where depth estimation from 2D RGB images alone is insufficient.
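A 3D box label is commonly stored as a center, a size, and a heading angle. The sketch below expands that parameterization into eight corner points. It assumes a z-up coordinate frame with yaw measured around the z axis and size given as (length, width, height); real datasets differ in these conventions, so treat the axis choices here as assumptions.

```python
import math


def box3d_corners(center, size, yaw):
    """Return the 8 corners of a yaw-rotated 3D box.

    center: (x, y, z) of the box center, in meters
    size:   (length, width, height), length along the heading direction
    yaw:    rotation around the z axis, in radians
    """
    cx, cy, cz = center
    length, width, height = size
    c, s = math.cos(yaw), math.sin(yaw)
    corners = []
    for sx in (1, -1):
        for sy in (1, -1):
            for sz in (1, -1):
                # Corner offset in the box's own frame.
                lx, ly, lz = sx * length / 2, sy * width / 2, sz * height / 2
                # Rotate around z, then translate to the box center.
                corners.append((cx + c * lx - s * ly,
                                cy + s * lx + c * ly,
                                cz + lz))
    return corners


# Scooter-sized box (illustrative dimensions), heading along +x.
corners = box3d_corners(center=(10.0, 2.0, 0.6), size=(1.2, 0.5, 1.2), yaw=0.0)
xs = [p[0] for p in corners]
print(len(corners), min(xs), max(xs))
```

Rotating the same box by 90 degrees swaps its footprint along x and y, which is a quick sanity check when importing labels from a tool with an unfamiliar yaw convention.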

Video annotation adds temporal context across frames, enabling trajectory prediction, motion modeling, and consistent identity tracking. For fast-moving vehicles with erratic behavior, the temporal dimension is not optional.
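Many video labeling workflows have annotators draw boxes on keyframes and fill the frames in between automatically. The sketch below shows the simplest version, linear interpolation between keyframes; the function names and the dictionary layout are assumptions for illustration.

```python
def interpolate_box(frame_a, box_a, frame_b, box_b, frame):
    """Linearly interpolate a box between two keyframes."""
    if frame_a >= frame_b or not frame_a <= frame <= frame_b:
        raise ValueError("frame must lie between two distinct, ordered keyframes")
    t = (frame - frame_a) / (frame_b - frame_a)
    return tuple(a + t * (b - a) for a, b in zip(box_a, box_b))


def fill_track(keyframes):
    """Expand {frame: box} keyframes into a box for every frame in range."""
    frames = sorted(keyframes)
    if not frames:
        return {}
    track = {}
    for fa, fb in zip(frames, frames[1:]):
        for f in range(fa, fb):
            track[f] = interpolate_box(fa, keyframes[fa], fb, keyframes[fb], f)
    track[frames[-1]] = tuple(keyframes[frames[-1]])
    return track


# One track_id, labeled by hand at frames 0 and 10 only.
keyframes = {0: (0, 0, 10, 10), 10: (100, 0, 110, 10)}
track = fill_track(keyframes)
print(track[5])  # (50.0, 0.0, 60.0, 10.0)
```

Linear interpolation assumes roughly constant velocity between keyframes. For vehicles that swerve or brake abruptly, that assumption breaks quickly, so keyframes need to be placed more densely than they would be for cars.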

Each format serves a specific modeling goal. Choosing the right combination up front, rather than retrofitting labels onto a dataset designed for a different task, is one of the more consequential decisions in any micromobility annotation project.

Computer Vision Use Cases for Micromobility

Micromobility annotation supports several distinct production systems, each with different data requirements.

Urban Traffic Monitoring

City operators and infrastructure planners need accurate, continuous counts of micromobility vehicles to understand usage patterns, evaluate lane utilization, and guide investment decisions. Detection and tracking models trained on annotated data provide that baseline.

The annotation challenge here is scale and consistency: systems must perform reliably across dozens of camera viewpoints, lighting conditions, and traffic densities.
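As a rough sketch of how tracked detections become counts, the snippet below counts unique tracks per class across cameras and ignores tracks seen in too few frames. The record layout (`camera`, `track_id`, `frame`, `cls`) and the `min_frames` filter are assumptions for this example, not a prescribed format.

```python
from collections import defaultdict


def count_vehicles(detections, min_frames=3):
    """Count unique tracks per class, skipping very short tracks."""
    frames_seen = defaultdict(set)
    class_of = {}
    for d in detections:
        key = (d["camera"], d["track_id"])  # track IDs are per camera
        frames_seen[key].add(d["frame"])
        class_of[key] = d["cls"]
    counts = defaultdict(int)
    for key, frames in frames_seen.items():
        if len(frames) >= min_frames:
            counts[class_of[key]] += 1
    return dict(counts)


detections = (
    [{"camera": "cam1", "track_id": 1, "frame": f, "cls": "e-scooter"} for f in range(5)]
    + [{"camera": "cam2", "track_id": 1, "frame": f, "cls": "e-bike"} for f in range(4)]
    + [{"camera": "cam1", "track_id": 2, "frame": 0, "cls": "e-scooter"}]  # one-frame flicker
)
print(count_vehicles(detections))  # {'e-scooter': 1, 'e-bike': 1}
```

Counts built this way inherit every identity switch in the tracker, which is why consistent track IDs in the training labels matter for this use case.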

ADAS and Autonomous Driving

Advanced driver assistance systems and autonomous vehicles must detect and predict the trajectories of scooters and bicycles in real time. These vehicles change direction rapidly, occupy narrow gaps between cars, and behave less predictably than motorized traffic. High recall and precise localization are not preferences in this context; they are safety requirements.

Research on micromobility detection in urban traffic specifically highlights the inadequacy of detection pipelines designed around larger vehicle classes when applied to scooters and e-bikes.

Fleet Management

Shared mobility operators use computer vision to monitor their fleets: detecting improperly parked vehicles, identifying safety violations such as riders without helmets where regulations require them, and tracking vehicle condition.

These systems require fine-grained classification and attribute annotation because it is not enough to detect a scooter; the system must determine whether it is correctly docked, damaged, or being used in violation of service terms.

Urban Planning

Aggregated vision data gives urban planners an objective basis for evaluating infrastructure decisions. Do protected bike lanes reduce conflict events with pedestrians? Where do scooter riders deviate from designated paths? Which intersections present the highest risk for micromobility users?

Answering these questions requires annotated datasets that capture not just vehicle positions but behavioral interactions and path context.

In every case, annotation quality determines system reliability. Poor labeling leads to biased models, undercounted vehicles, and unsafe predictions; these outcomes carry real consequences for riders and the public.

Challenges in Micromobility Annotation

Micromobility introduces a set of technical and operational challenges that standard vehicle annotation workflows were not designed to handle.

Small Size

A distant e-scooter may occupy only a few dozen pixels in a high-resolution frame. Standard anchor configurations and feature pyramid strategies struggle to detect these objects consistently. Annotation teams must place labels with higher precision than they would for cars or trucks, and the margin for error is smaller.
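A quick way to gauge how much of a dataset falls into the hard small-object regime is to bucket boxes by pixel area. The sketch below uses COCO-style thresholds (small below 32×32 pixels, large from 96×96 pixels); whether those thresholds suit a given camera setup is an assumption to verify.

```python
from collections import Counter


def size_bucket(bbox_xyxy, small_max=32 ** 2, medium_max=96 ** 2):
    """Classify a box as small, medium, or large by pixel area."""
    x1, y1, x2, y2 = bbox_xyxy
    area = max(0, x2 - x1) * max(0, y2 - y1)
    if area < small_max:
        return "small"
    if area < medium_max:
        return "medium"
    return "large"


def size_distribution(boxes):
    """Share of boxes in each size bucket."""
    counts = Counter(size_bucket(b) for b in boxes)
    total = sum(counts.values())
    return {name: counts[name] / total for name in counts} if total else {}


boxes = [
    (0, 0, 8, 30),     # distant scooter: 240 px^2
    (0, 0, 40, 40),    # mid-range rider: 1600 px^2
    (0, 0, 100, 100),  # close-up: 10000 px^2
    (5, 5, 15, 25),    # another distant scooter: 200 px^2
]
print(size_distribution(boxes))
```

If most micromobility boxes land in the small bucket, that is a signal to tighten labeling tolerances and to report small-object metrics separately during evaluation.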

Occlusion

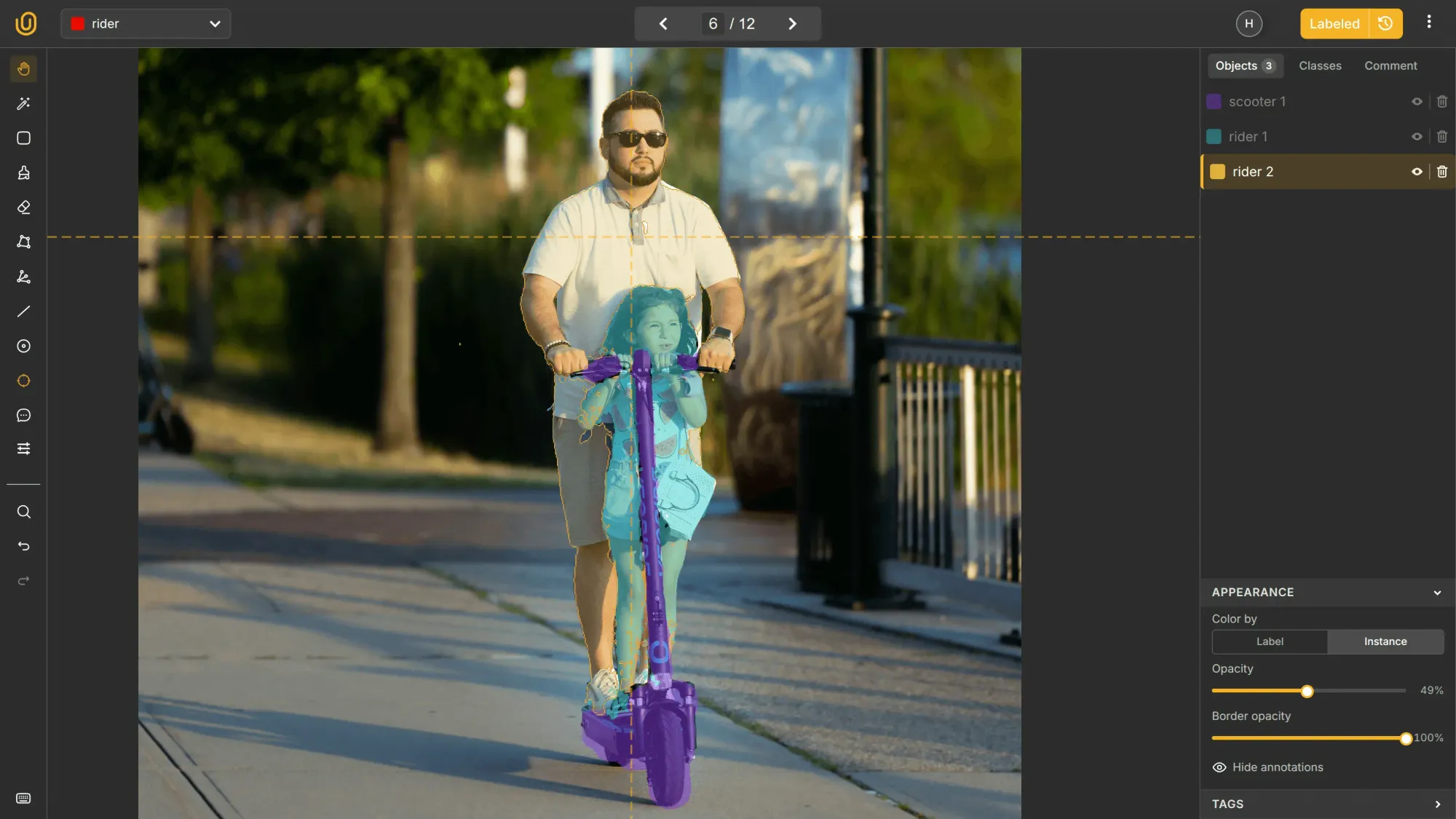

Partial occlusion is frequent and severe. A scooter may be hidden behind a parked car, a tree, another rider, or a street fixture. Annotation teams need clear, enforced policies for how to label partially visible objects and how to handle truncation at frame edges.

The current best practice is to annotate occluded objects as if they were fully visible, inferring the complete shape from the visible portion. Consistency across annotators on this point is critical, because inconsistency here directly degrades tracker performance.
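When both the inferred full-extent box and the visible portion are recorded, an occlusion attribute can be derived instead of guessed. The sketch below computes the visible fraction and maps it to a coarse level; the thresholds of 0.8 and 0.4 and the level names are hypothetical and would need to be fixed in the project guidelines.

```python
def box_area(box):
    """Area of an (x1, y1, x2, y2) box; zero for degenerate boxes."""
    return max(0.0, box[2] - box[0]) * max(0.0, box[3] - box[1])


def visible_fraction(full_box, visible_box):
    """Fraction of the full-extent box covered by the visible box."""
    clipped = (
        max(full_box[0], visible_box[0]),
        max(full_box[1], visible_box[1]),
        min(full_box[2], visible_box[2]),
        min(full_box[3], visible_box[3]),
    )
    full = box_area(full_box)
    return box_area(clipped) / full if full > 0 else 0.0


def occlusion_level(fraction_visible, light=0.8, heavy=0.4):
    """Map a visible fraction to a coarse occlusion label (hypothetical cutoffs)."""
    if fraction_visible >= light:
        return "none_or_light"
    if fraction_visible >= heavy:
        return "partial"
    return "heavy"


full_extent = (100, 100, 120, 200)   # scooter as if fully visible
visible_part = (100, 100, 120, 150)  # lower half hidden behind a parked car
fraction = visible_fraction(full_extent, visible_part)
print(fraction, occlusion_level(fraction))  # 0.5 partial
```

Deriving the attribute from geometry removes one source of disagreement between annotators, since only the two boxes require judgment.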

Shape Variety

Micromobility vehicles do not share a common silhouette. A rider standing upright on a scooter produces a thin vertical profile. A cyclist leans forward into a compact form. A cargo bike extends horizontally. Add a backpack, a delivery box, or a second passenger, and the geometry changes again.

Models trained primarily on the rectangular profiles of cars underperform on these irregular shapes unless the training data explicitly includes this variability.

Class Ambiguity

Behavioral context matters for classification. A pedestrian pushing a scooter is not the same object class as a rider on one, but without behavioral attributes captured in the annotation, models collapse these into a single category.

That ambiguity reduces interpretability, which in turn reduces the usefulness of detection outputs for downstream systems that need to distinguish between vehicle states.

Environmental Variability

Rain, low light, glare, lens flare, and shadows all degrade visual clarity and create inconsistency across annotation sessions. Labeling guidelines must address each condition explicitly, defining how annotators should handle degraded frames rather than leaving it to individual judgment.

Motion Blur

Fast-moving riders produce blurred frames, particularly in adverse weather or with lower-quality cameras. Precise polygon placement and segmentation become significantly harder under blur. Guidelines must specify when to annotate blurred objects, when to skip frames, and how to handle the boundary cases.

Edge Cases

Micromobility edge cases are numerous and unavoidable: two riders on a single scooter, a vehicle lying on its side, a rider carrying oversized cargo, a bicycle being pulled rather than ridden.

Each scenario, if not explicitly covered in the annotation schema, introduces noise into the training data. Defining and labeling these cases in advance is preferable to discovering them as model failures after training.

Without structured quality assurance processes and precise annotation guidelines, label drift accumulates across these dimensions, degrading dataset quality in ways that compound over time.

Best Practices for Micromobility Annotation

Define a Detailed Taxonomy

Generic classes produce generic models. Distinguish between bicycle, e-bike, e-scooter, cargo bike, and rider-with-scooter-on-foot at the taxonomy level. Define how to handle ambiguous edge cases before annotation begins, not after annotators have already made inconsistent decisions across thousands of frames.
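A taxonomy like this can be written down as a small tree so that fine-grained labels always roll up to a coarse class. The sketch below is one possible layout; placing rider-with-scooter-on-foot under a pedestrian branch is an assumption made for this example, and a project may reasonably decide otherwise.

```python
# Hypothetical two-level taxonomy; empty dicts mark leaf classes.
TAXONOMY = {
    "micromobility": {
        "bicycle": {},
        "e-bike": {},
        "e-scooter": {},
        "cargo bike": {},
    },
    "pedestrian": {
        "rider-with-scooter-on-foot": {},
    },
}


def path_to(label, tree=None, prefix=()):
    """Return the path from the root to `label`, or None if it is unknown."""
    tree = TAXONOMY if tree is None else tree
    for name, children in tree.items():
        here = prefix + (name,)
        if name == label:
            return here
        found = path_to(label, children, here)
        if found is not None:
            return found
    return None


def coarse_class(label):
    """Top-level class for a label; raises KeyError for unknown labels."""
    path = path_to(label)
    if path is None:
        raise KeyError(f"label not in taxonomy: {label}")
    return path[0]


print(coarse_class("e-scooter"))                   # micromobility
print(coarse_class("rider-with-scooter-on-foot"))  # pedestrian
```

Rejecting unknown labels at import time is a cheap guard against annotators inventing ad hoc class names mid-project.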

Annotate Video When Possible

Video-based annotation reduces frame-to-frame label noise and provides the temporal supervision that tracking models require. When the downstream task involves trajectory prediction or behavior classification, image-only datasets are a structural limitation regardless of their size.

Combine Bounding Boxes with Segmentation

Bounding boxes provide volume; segmentation provides precision. For thin structures like scooter frames and handlebars, and for heavily occluded scenes, segmentation masks produce meaningfully better model performance. The additional annotation cost is justified for any task where spatial accuracy matters.

Build for Diversity

A dataset that covers only good weather, daytime, low-density traffic, and standard vehicle configurations will produce a model that fails in the conditions that matter most. Explicitly plan for diversity across urban layouts, lighting conditions, weather, rider demographics, and vehicle configurations.

If the distribution of training data does not reflect the distribution of real-world deployment conditions, the performance gap will be predictable and avoidable.
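Coverage can be checked mechanically if each frame carries condition metadata. The sketch below flags conditions that fall under a minimum share of the dataset; the metadata key `lighting`, the expected values, and the 5% floor are assumptions chosen for illustration.

```python
from collections import Counter


def coverage_gaps(frames, key, expected, min_share=0.05):
    """Return {value: share} for expected values below `min_share`."""
    counts = Counter(frame[key] for frame in frames)
    total = sum(counts.values())
    gaps = {}
    for value in expected:
        share = counts.get(value, 0) / total if total else 0.0
        if share < min_share:
            gaps[value] = share
    return gaps


frames = (
    [{"lighting": "day"}] * 90
    + [{"lighting": "night"}] * 8
    + [{"lighting": "dusk"}] * 2
)
print(coverage_gaps(frames, "lighting", ["day", "night", "dusk", "rain"]))
```

Here dusk sits at 2% and rain is missing entirely, so both are reported. The same check can be repeated for weather, traffic density, or vehicle configuration before collection budgets are spent.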

Use AI-Assisted Pre-Annotation

Model-generated predictions reduce the manual effort required per frame. Annotators review and correct rather than create from scratch, which improves throughput without sacrificing accuracy. The human validation step is non-negotiable: pre-annotation accelerates the process, but it does not replace human judgment.
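One way to keep a human in the loop for every prediction while still saving time is to route pre-annotations into review modes by model confidence. The sketch below is a hypothetical routing rule; the `score` field, the 0.5 cutoff, and the mode names are assumptions, and nothing is auto-accepted.

```python
def build_review_queue(predictions, careful_below=0.5):
    """Order pre-annotations for human review, least confident first.

    Every prediction gets a review mode; none bypasses the annotator.
    """
    queue = []
    for pred in sorted(predictions, key=lambda p: p["score"]):
        mode = "redraw_or_fix" if pred["score"] < careful_below else "confirm"
        queue.append({**pred, "review_mode": mode})
    return queue


predictions = [
    {"id": "a", "cls": "e-scooter", "score": 0.92},
    {"id": "b", "cls": "e-bike", "score": 0.31},
    {"id": "c", "cls": "cargo bike", "score": 0.58},
]
for item in build_review_queue(predictions):
    print(item["id"], item["review_mode"])
```

Reviewing low-confidence items first puts annotator attention where the model is most likely to be wrong, which is also where corrected labels tend to be most valuable for retraining.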

Implement Multi-Stage Quality Control

A single review pass is insufficient for complex micromobility datasets. Effective QA catches inconsistent labeling across annotators, misclassified behaviors, invalid polygons, and missing edge case labels. Peer validation and targeted checks for rare scenarios reduce systematic bias before it becomes entrenched in the dataset.
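A peer-validation pass can be partly automated by comparing two annotators' labels on the same frame. The sketch below greedily matches boxes by IoU and reports agreements, class mismatches, and unmatched boxes; the 0.5 IoU threshold and the report layout are assumptions for illustration.

```python
def iou(a, b):
    """IoU of two axis-aligned boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0 else 0.0


def compare_annotators(labels_a, labels_b, iou_threshold=0.5):
    """Greedy one-to-one matching of (box, class) labels from two annotators."""
    unmatched_b = list(range(len(labels_b)))
    report = {"agree": 0, "class_mismatch": [], "only_a": [], "only_b": []}
    for i, (box_a, cls_a) in enumerate(labels_a):
        best_j, best_iou = None, iou_threshold
        for j in unmatched_b:
            score = iou(box_a, labels_b[j][0])
            if score >= best_iou:
                best_j, best_iou = j, score
        if best_j is None:
            report["only_a"].append(i)       # B missed this object
            continue
        unmatched_b.remove(best_j)
        if cls_a == labels_b[best_j][1]:
            report["agree"] += 1
        else:
            report["class_mismatch"].append((i, best_j))
    report["only_b"] = unmatched_b           # A missed these objects
    return report


annotator_a = [((0, 0, 10, 10), "e-scooter"), ((50, 50, 60, 60), "e-bike"),
               ((200, 200, 210, 210), "bicycle")]
annotator_b = [((1, 0, 11, 10), "e-scooter"), ((50, 50, 60, 60), "bicycle"),
               ((300, 300, 310, 310), "e-scooter")]
print(compare_annotators(annotator_a, annotator_b))
```

Class mismatches on well-aligned boxes usually point to a taxonomy or guideline gap, while unmatched boxes point to missed objects or inconsistent occlusion handling.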

A structured annotation platform provides many of these processes out of the box. One example is Unitlab AI, a fully automated data platform.

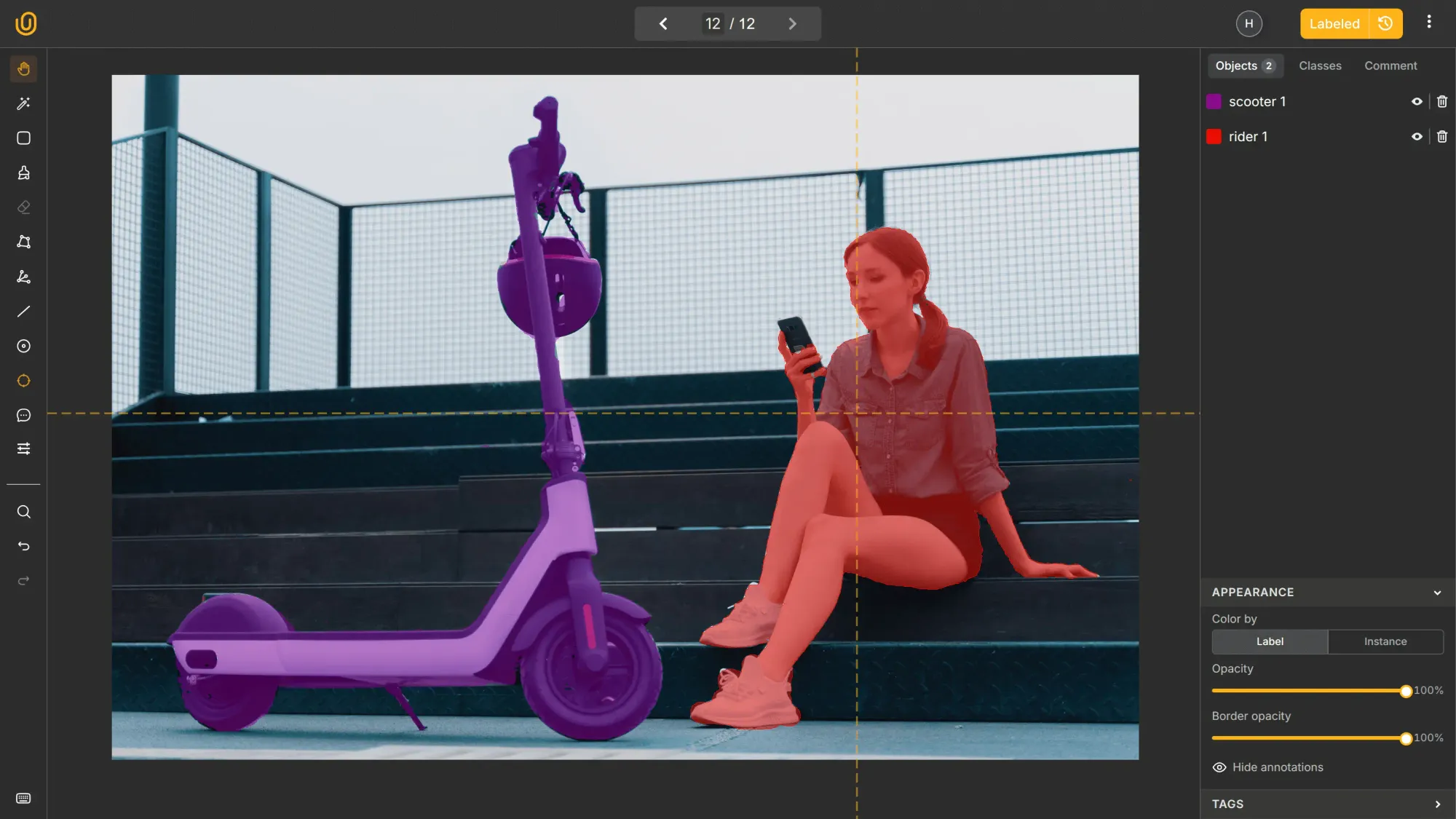

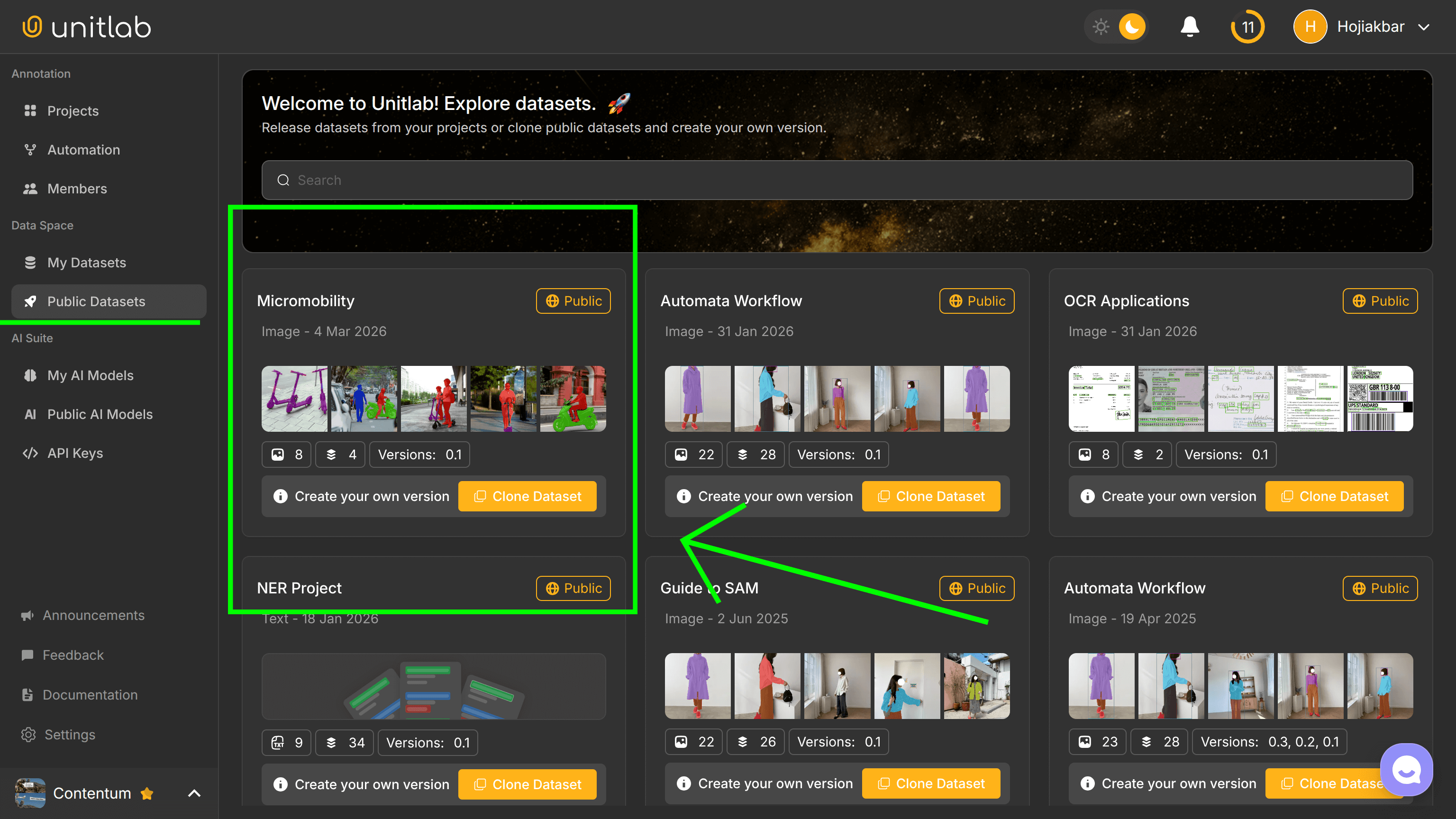

We have also released the dataset of annotated micromobility images used in this post on our platform, where you can access it with a free account.

Unitlab AI for Micromobility Annotation

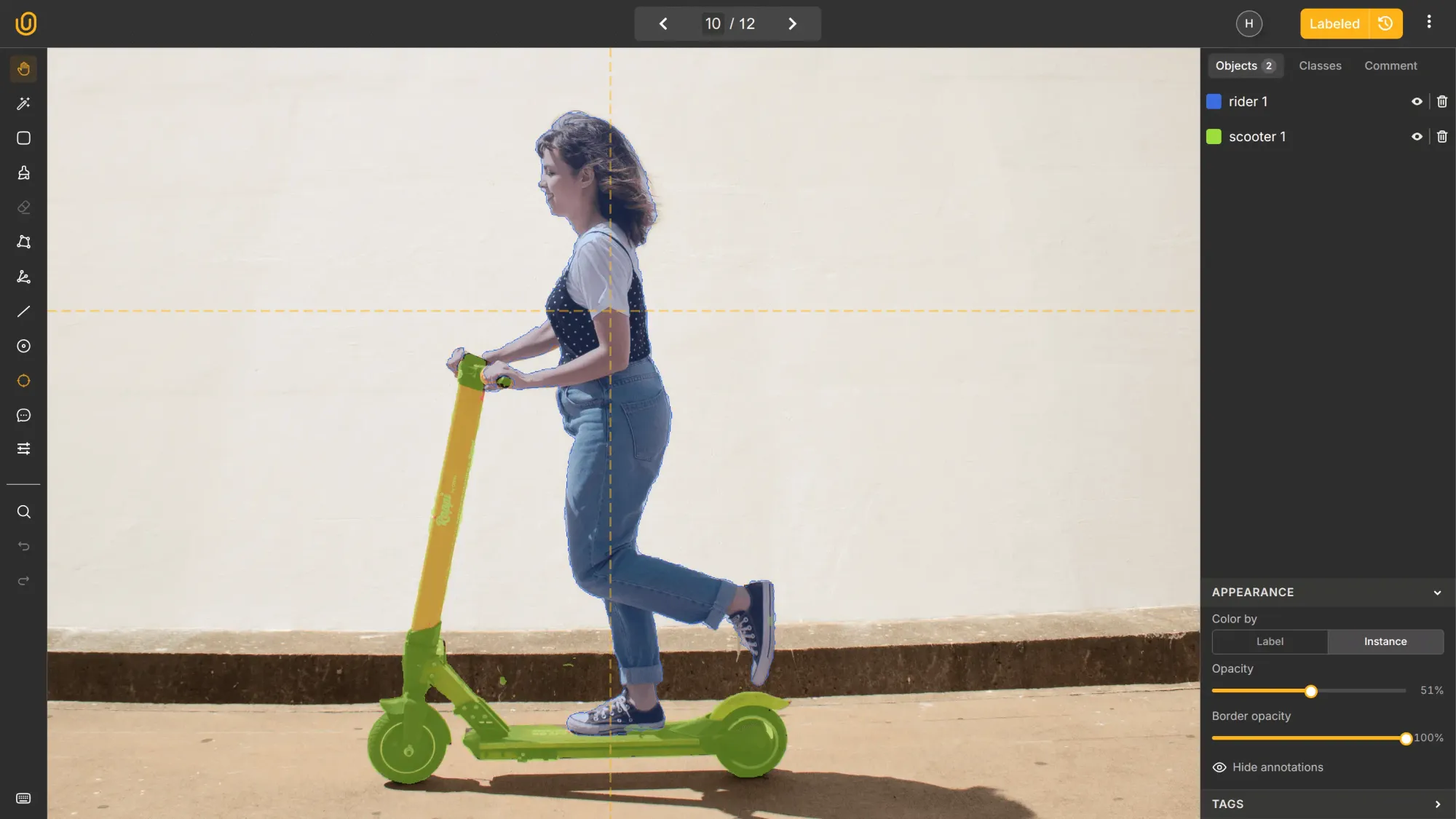

Unitlab AI provides an integrated environment for building high-quality micromobility datasets. Bounding boxes, polygon segmentation, keypoints, and video annotation are all supported within a single collaborative interface, with no need to manage separate tools for different label formats.

Custom ontologies for e-scooters, e-bikes, cargo bikes, rider states, and textual elements such as license plates or fleet identifiers can be configured through hierarchical class and attribute structures without engineering work.

AI-assisted tools, including Magic Crop and SAM-based Magic Touch, generate initial predictions that annotators refine and reviewers validate within the same system. That human-in-the-loop workflow reduces cost and shortens project timelines while maintaining consistency across thousands of frames.

Dataset management features cover cloning, versioning, and structured export compatible with standard object detection, segmentation, tracking, and OCR pipelines.

For teams building perception systems for autonomous vehicles, traffic monitoring, or mobility platforms, this reduces the operational overhead between annotation and training.

Future Trends in Micromobility Computer Vision

The near-term trajectory of micromobility perception points in a few clear directions.

Multi-sensor fusion will become standard. Combining LiDAR, radar, and camera data improves detection of small and partially occluded objects in ways that single-sensor systems cannot match. As sensor hardware costs fall and autonomous vehicle platforms mature, annotated 3D and multimodal datasets will become the baseline expectation rather than a premium option.

Self-supervised learning may reduce the volume of fully labeled data required for initial model training. The annotation requirement will not disappear (high-quality labeled data remains essential for evaluation, benchmarking, and fine-tuning), but the ratio of labeled to unlabeled data required to reach a given performance threshold may improve.

Behavior prediction will demand more from annotation. As systems move from detecting what is present to anticipating what will happen next, the labels that matter are not just bounding boxes and class IDs but posture, intent signals, gaze direction, and interaction context. That requires annotators with deeper domain knowledge and annotation schemas with more expressive attribute structures.

Regulatory pressure is also coming. As cities establish safety performance thresholds for vulnerable road user detection, a category that includes micromobility riders, vision systems will need to demonstrate compliance against defined regulatory benchmarks. That creates a direct institutional incentive for higher annotation quality and more standardized dataset formats.

Conclusion

Micromobility is no longer a peripheral category in urban transportation. It is a core part of how cities move, and perception systems that cannot handle it reliably are already falling behind.

The annotation challenges are real: small objects, heavy occlusion, geometric variability, behavioral ambiguity, and a long tail of edge cases that standard workflows were not designed to address. Meeting those challenges requires detailed taxonomies, format diversity, explicit quality control, and tooling built for the task.

The investment is justified. Accurate micromobility annotation is the foundation for every downstream system (detection, tracking, behavior prediction, compliance monitoring) that the industry will depend on as adoption continues to grow. Getting the data right now determines how reliably those systems perform later.

Unitlab AI can help you build modern vision systems that can handle micromobility even in the busiest streets.

Explore More

- Bounding Box Essentials in Data Annotation with a Demo Project

- Guide to Pixel-perfect Image Labeling with a Demo Project [2026]

- What is LiDAR: Essentials & Applications

References

- Khalil Sabri et al. (May 28, 2024). Detection of Micromobility Vehicles in Urban Traffic Videos. Conference on Robots and Vision: Source

- McKinsey & Company (Apr 29, 2025). What is micromobility? McKinsey & Company: Source

- Inna Nomerovska (Oct 07, 2021). Making E-scooters Safer with Data Annotation. Keymakr: Source

- Tatiana Verbitskaya (Jan 30, 2026). Micromobility annotation. Keymakr: Source

- Wikipedia (no date). Micromobility. Wikipedia: Source